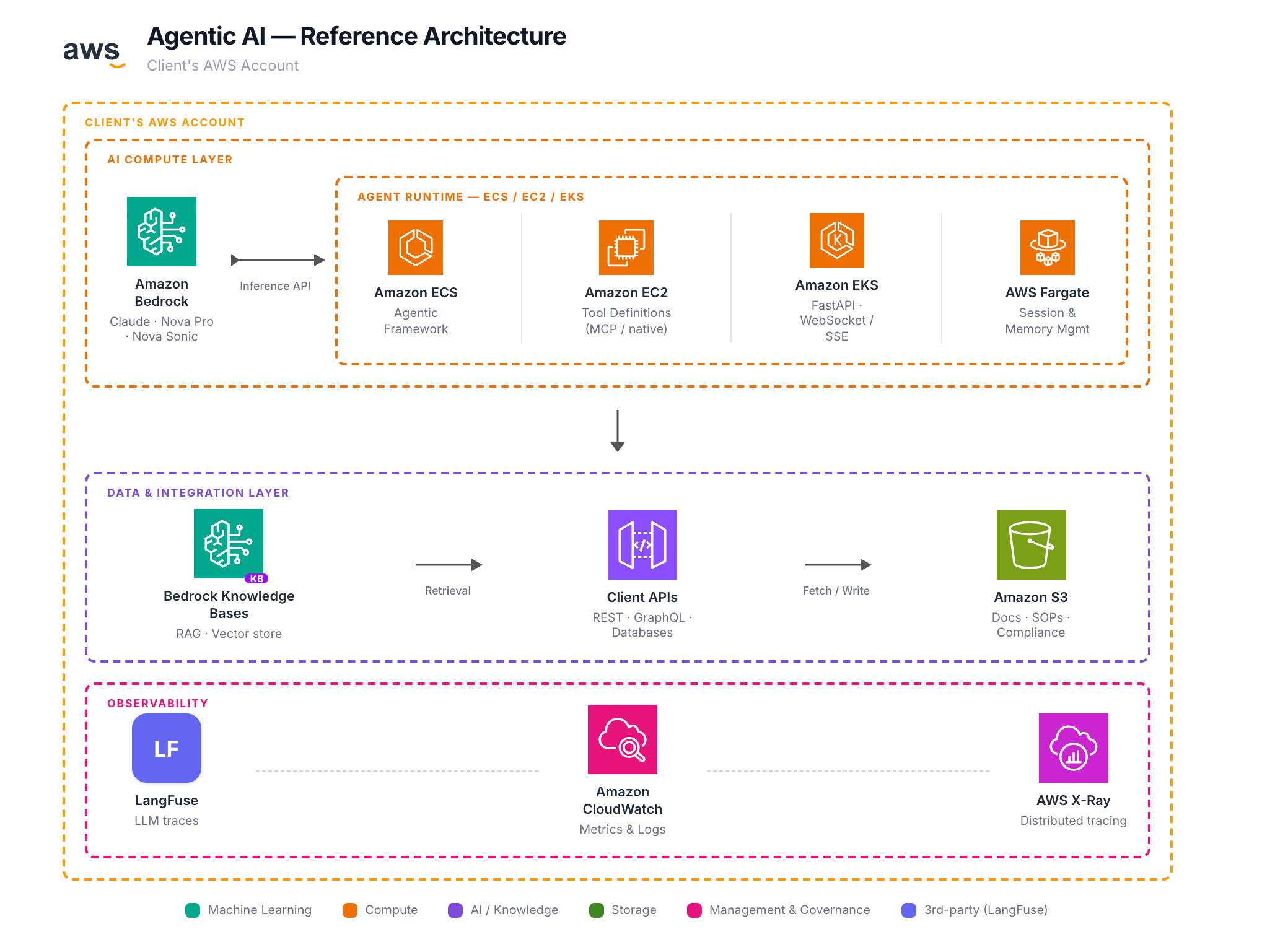

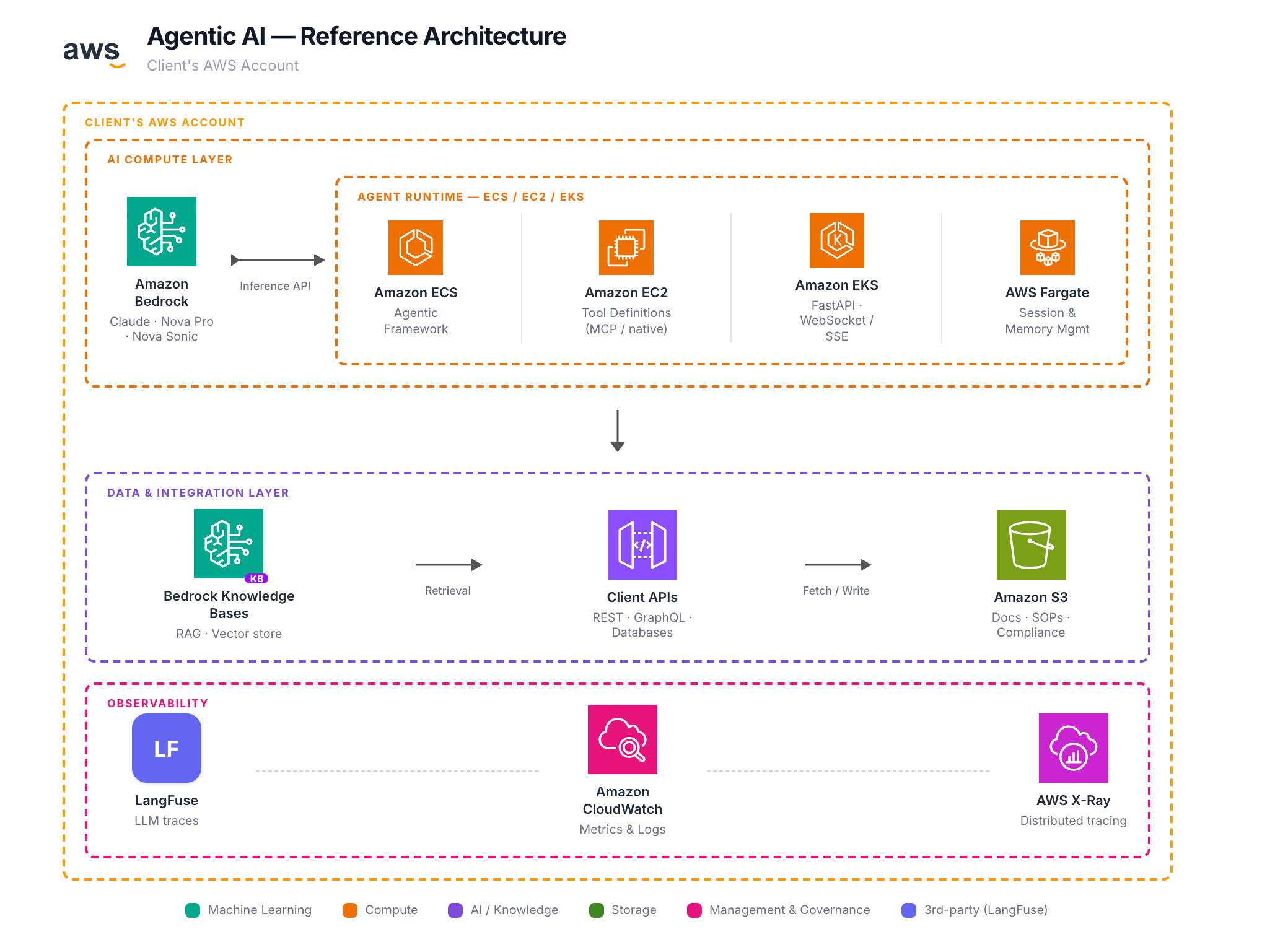

Architecture

Everything runs inside the client's AWS account. No data leaves the perimeter.

All inference stays within AWS. Bedrock models run in the client's region — no data sent to third-party model providers.

Security-First Agentic AI — Built on Your Data, Deployed in Your AWS Account

We deploy custom GenAI agents that operate on your own APIs, documentation, and compliance rulesets to automate manual tasks. Everything runs inside your AWS account, and no data leaves the perimeter. Amazon Bedrock is the inference backbone, the agents are built on production-grade frameworks, and a working system is delivered in weeks.

A self-contained AI backend that connects to your existing systems and automates workflows end-to-end.

Foundation models (Claude, Nova Pro, Nova Sonic) — no self-hosted GPUs, pay-per-token

Strands, LangGraph, CrewAI, ADK, Swarm, AutoGen: we match the framework to the use case

Agents call your APIs, query databases, search documents, trigger workflows via MCP or custom tools

Ingests internal docs, compliance rulesets, SOPs — grounds every response in your own data

Real-time token-by-token responses, bidirectional voice (Nova Sonic), vision (Nova Pro)

Trace every agent step, token cost, latency, tool call success rate
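
The tool-use and tracing points above can be illustrated with a small, framework-independent sketch. It is not how Strands, LangGraph, MCP, or Bedrock implement these features; `ToolRegistry`, `lookup_booking`, and the trace record fields are hypothetical names chosen for illustration. The idea is only that every tool call goes through one choke point that records success and latency.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List


@dataclass
class ToolRegistry:
    """Hypothetical registry: maps tool names to callables and records a trace."""
    tools: Dict[str, Callable[..., Any]] = field(default_factory=dict)
    trace: List[dict] = field(default_factory=list)

    def register(self, name: str, fn: Callable[..., Any]) -> None:
        self.tools[name] = fn

    def call(self, name: str, **kwargs: Any) -> Any:
        start = time.perf_counter()
        ok, result = True, None
        try:
            result = self.tools[name](**kwargs)
        except Exception as exc:
            # Record the failure (including unknown tool names) instead of
            # crashing the agent loop.
            ok, result = False, repr(exc)
        self.trace.append({
            "tool": name,
            "ok": ok,
            "latency_s": time.perf_counter() - start,
        })
        return result

    def success_rate(self) -> float:
        """Fraction of recorded tool calls that completed without an exception."""
        if not self.trace:
            return 0.0
        return sum(1 for t in self.trace if t["ok"]) / len(self.trace)


def lookup_booking(booking_id: str) -> dict:
    # Stand-in for a call to the client's own API (hypothetical endpoint).
    if not booking_id:
        raise ValueError("booking_id is required")
    return {"booking_id": booking_id, "status": "confirmed"}


registry = ToolRegistry()
registry.register("lookup_booking", lookup_booking)
```

In a real deployment the trace records would go to whatever observability backend is in use rather than an in-memory list.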

Real deployments, real integrations, real users.

AI concierge deployed for a hotel management platform. The agent authenticates against the client's Symfony API, calls booking/guest/room-service endpoints via tool use, and responds to guests in real time over Server-Sent Events (SSE). Framework: Google ADK + Amazon Bedrock (Claude). Handles message routing, activity booking, room service orders, and multilingual guest support, all grounded in live data from the client's own backend.
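
For readers unfamiliar with SSE, the wire format is simple: each event is a few `field: value` lines followed by a blank line. The sketch below shows how model tokens could be framed for such a stream. The function names and the `token` / `done` event names are assumptions made for this example, not details of the deployment described above.

```python
import json
from typing import Iterable, Iterator


def sse_event(payload: dict, event: str = "token") -> str:
    """Format one Server-Sent Events frame: event name, data line, blank line."""
    # json.dumps keeps the payload on a single line, which the SSE format requires
    # for a single data field.
    return f"event: {event}\ndata: {json.dumps(payload)}\n\n"


def stream_tokens(tokens: Iterable[str]) -> Iterator[str]:
    """Yield one SSE frame per token, then a final 'done' frame."""
    for tok in tokens:
        yield sse_event({"text": tok})
    yield sse_event({}, event="done")
```

A web framework would write these frames to a response with the `text/event-stream` content type.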

AI learning assistant for an EdTech platform. The agent analyses the learner's screen in real time (frame capture → Nova Pro vision), provides step-by-step guidance streamed token-by-token over WebSocket, and supports bidirectional voice interaction via Nova Sonic. A guideline agent prepares session context using web search (MCP tools), and a verification agent filters hallucinated instructions before they reach the user.
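
One simple way a verification step of this kind could work is to reject any instruction that refers to something not actually present on the learner's screen. The sketch below assumes the vision step yields a set of visible UI element names and that each instruction carries a `target` field. Both are assumptions for illustration, and the real verification agent may use a model rather than a lookup.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass(frozen=True)
class Instruction:
    text: str
    target: str  # UI element the step refers to (hypothetical field)


def verify(steps: List[Instruction], visible_elements: Set[str]) -> List[Instruction]:
    """Keep only steps whose target was actually detected on screen."""
    known = {e.lower() for e in visible_elements}
    return [s for s in steps if s.target.lower() in known]
```

For example, a step targeting a "Magic" menu is dropped when the detected elements are only "Save" and "File".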

Agents that ingest regulatory documents, internal SOPs, and compliance rulesets into Bedrock Knowledge Bases, then answer questions, flag non-compliance, and draft responses grounded exclusively in approved source material. Answers are restricted to that material, so every claim is traceable to a source document.
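
Traceability of this kind can be checked mechanically. The sketch below assumes answers carry inline citation markers such as `[SOP-12]` and flags any sentence that cites nothing or cites a document outside the approved set. The marker syntax and the function name are assumptions for this example. Bedrock Knowledge Bases return citations in their own structured form.

```python
import re
from typing import List, Set

# Inline citation marker, e.g. [SOP-12] (assumed syntax for this sketch).
CITATION = re.compile(r"\[([A-Za-z0-9_.-]+)\]")


def ungrounded_sentences(answer: str, approved_sources: Set[str]) -> List[str]:
    """Return sentences that cite nothing, or cite a source outside the approved set."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", answer) if s.strip()]
    flagged = []
    for sentence in sentences:
        cited = set(CITATION.findall(sentence))
        if not cited or not cited <= approved_sources:
            flagged.append(sentence)
    return flagged
```

A non-empty result would be a reason to block the draft or route it to a human reviewer.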

A private, compliant general-purpose assistant deployed inside the client's AWS account — the organization's own ChatGPT, with no data leaving the perimeter. Employees interact through a branded web UI. Access is controlled via SSO, conversations are logged for audit, and Bedrock Guardrails enforce PII redaction and grounding checks.

| Tool | What It Does |
|---|---|
| RAG | Searches internal knowledge bases (policies, SOPs, product docs) and answers with citations |
| Database Explorer | Queries databases (RDS, Redshift, DynamoDB, Athena) in natural language; read-only, scoped by IAM |
| Chart Generator | Produces charts from query results or uploaded data, rendered inline |
| Doc Summarizer | Ingests PDFs, spreadsheets, or slides and produces structured summaries |
| Web Search | Searches Confluence, SharePoint, or S3-hosted docs via MCP connectors |
| Code Interpreter | Executes Python in a sandbox for data analysis, calculations, or file transforms |
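
The read-only constraint on the Database Explorer is enforced by IAM according to the table. An application-level check can sit in front of that as a second layer. The following is a deliberately conservative sketch of such a check, with assumed keyword lists and a hypothetical function name. It is not a SQL parser and does not replace database or IAM permissions.

```python
import re

# Statements a read-only explorer would accept (assumption for this sketch).
_ALLOWED_START = re.compile(r"^\s*(select|with)\b", re.IGNORECASE)
_FORBIDDEN = re.compile(
    r"\b(insert|update|delete|drop|alter|create|truncate|grant|revoke|merge)\b",
    re.IGNORECASE,
)


def is_read_only(sql: str) -> bool:
    """Conservative check: single statement, starts with SELECT/WITH, no write keywords."""
    body = sql.strip().rstrip(";")
    if ";" in body:  # reject stacked statements
        return False
    return bool(_ALLOWED_START.match(body)) and not _FORBIDDEN.search(body)
```

The check errs on the side of rejection: a query that merely contains the word `delete` inside a string literal is refused as well.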

Zero-trust by default. Every agent scoped, every action logged.
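
As a rough illustration of "every agent scoped, every action logged", the sketch below gives each agent an explicit allow-list and writes an audit entry for every attempt, allowed or denied. In an AWS deployment the scoping itself would normally live in IAM policies. `ScopedAgent` and the action strings are hypothetical names used only to show the pattern.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import FrozenSet, List


@dataclass
class ScopedAgent:
    name: str
    allowed_actions: FrozenSet[str]
    audit_log: List[dict] = field(default_factory=list)

    def perform(self, action: str) -> bool:
        """Deny by default; log the attempt whether or not it is allowed."""
        allowed = action in self.allowed_actions
        self.audit_log.append({
            "agent": self.name,
            "action": action,
            "allowed": allowed,
            "at": datetime.now(timezone.utc).isoformat(),
        })
        return allowed
```

Denied attempts are logged too, since they are often the most useful entries in an audit.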

Framework-agnostic. We select the right tool based on use case complexity and the client's stack.

Strands: AWS-native agents with built-in Bedrock integration, tool use, and memory

LangGraph: complex multi-step workflows, conditional routing, human-in-the-loop

CrewAI: multi-agent collaboration, with role-based agents working as a team

ADK: rapid prototyping with built-in web UI, session management, tool orchestration

Swarm: lightweight agent handoff patterns, conversational routing

AutoGen: research-oriented multi-agent conversations, code generation pipelines

All frameworks are deployed with Amazon Bedrock as the inference provider. No dependency on external model APIs.

Discovery to production in 4–6 weeks.

Everything runs in the client's AWS account, no third-party model providers

Working agent with real tool integrations, not a chatbot demo

We pick the right tool for the job, not the one we're locked into

Bedrock is pay-per-token, no GPU reservation, no idle compute

Every agent decision is logged, every tool call is traceable, every output is reviewable
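
The pay-per-token point above comes down to simple arithmetic: cost scales with tokens processed, not with reserved hardware. The sketch below shows the calculation. The prices in the usage note are placeholders, not actual Bedrock pricing, which varies by model and region.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 price_in_per_1k: float, price_out_per_1k: float) -> float:
    """Cost of one request given per-1,000-token prices (prices supplied by the caller)."""
    return (input_tokens / 1000) * price_in_per_1k + (output_tokens / 1000) * price_out_per_1k
```

With placeholder prices of 0.003 per 1,000 input tokens and 0.015 per 1,000 output tokens, a request with 2,000 input and 500 output tokens costs 0.006 + 0.0075 = 0.0135 in the same currency unit. A request that is never made costs nothing.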

This offering applies to any organization on AWS that needs to automate knowledge work, enforce compliance, or augment customer-facing operations with AI — without sending data outside their cloud perimeter.